What’s New¶

These are new features and improvements of note in each release.

v0.14.0 (May 31, 2014)¶

This is a major release from 0.13.1 and includes a small number of API changes, several new features, enhancements, and performance improvements along with a large number of bug fixes. We recommend that all users upgrade to this version.

- Highlights include:

- Officially support Python 3.4

- SQL interfaces updated to use sqlalchemy, See Here.

- Display interface changes, See Here

- MultiIndexing Using Slicers, See Here.

- Ability to join a singly-indexed DataFrame with a multi-indexed DataFrame, see Here

- More consistency in groupby results and more flexible groupby specifications, See Here

- Holiday calendars are now supported in CustomBusinessDay, see Here

- Several improvements in plotting functions, including: hexbin, area and pie plots, see Here.

- Performance doc section on I/O operations, See Here

- Other Enhancements

- API Changes

- Text Parsing API Changes

- Groupby API Changes

- Performance Improvements

- Prior Deprecations

- Deprecations

- Known Issues

- Bug Fixes

Warning

In 0.14.0 all NDFrame based containers have undergone significant internal refactoring. Before that each block of homogeneous data had its own labels and extra care was necessary to keep those in sync with the parent container’s labels. This should not have any visible user/API behavior changes (GH6745)

API changes¶

read_excel uses 0 as the default sheet (GH6573)

iloc will now accept out-of-bounds indexers for slices, e.g. a value that exceeds the length of the object being indexed. These will be excluded. This will make pandas conform more with python/numpy indexing of out-of-bounds values. A single indexer that is out-of-bounds and drops the dimensions of the object will still raise IndexError (GH6296, GH6299). This could result in an empty axis (e.g. an empty DataFrame being returned)

In [1]: dfl = DataFrame(np.random.randn(5,2),columns=list('AB'))

In [2]: dfl
Out[2]:
          A         B
0  1.474071 -0.064034
1 -1.282782  0.781836
2 -1.071357  0.441153
3  2.353925  0.583787
4  0.221471 -0.744471

In [3]: dfl.iloc[:,2:3]
Out[3]:
Empty DataFrame
Columns: []
Index: [0, 1, 2, 3, 4]

In [4]: dfl.iloc[:,1:3]
Out[4]:
          B
0 -0.064034
1  0.781836
2  0.441153
3  0.583787
4 -0.744471

In [5]: dfl.iloc[4:6]
Out[5]:
          A         B
4  0.221471 -0.744471

These are out-of-bounds selections

dfl.iloc[[4,5,6]]
IndexError: positional indexers are out-of-bounds

dfl.iloc[:,4]
IndexError: single positional indexer is out-of-bounds
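For comparison, the built-in Python behaviour that iloc now mirrors can be sketched with a plain list. This is only an analogy, not pandas code, and the name rows is made up for the illustration: a slice that runs past the end is silently clipped, while a single out-of-bounds position raises IndexError.

```python
rows = ['r0', 'r1', 'r2', 'r3', 'r4']

# Slices are clipped to the available range instead of raising.
tail = rows[4:6]
empty = rows[7:9]

# A single out-of-bounds position still raises IndexError.
try:
    rows[5]
    raised = False
except IndexError:
    raised = True
```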

Slicing with negative start, stop & step values handles corner cases better (GH6531):

- df.iloc[:-len(df)] is now empty

- df.iloc[len(df)::-1] now enumerates all elements in reverse
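The two corner cases above can be sketched on a small Series. This is a minimal illustration that assumes a recent pandas is installed; the values are arbitrary.

```python
import pandas as pd

s = pd.Series([10, 20, 30])

# A stop of -len(s) leaves nothing to select.
empty = s.iloc[:-len(s)]

# A start at or past the end is clamped to the last element,
# so a step of -1 walks every element in reverse.
reversed_all = s.iloc[len(s)::-1]
```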

The DataFrame.interpolate() keyword downcast default has been changed from infer to None. This is to preserve the original dtype unless explicitly requested otherwise (GH6290).

When converting a dataframe to HTML it used to return Empty DataFrame. This special case has been removed, instead a header with the column names is returned (GH6062).

Series and Index now internally share more common operations, e.g. factorize(), nunique(), value_counts() are now supported on Index types as well. The Series.weekday property is removed from Series for API consistency. Using a DatetimeIndex/PeriodIndex method on a Series will now raise a TypeError. (GH4551, GH4056, GH5519, GH6380, GH7206).

Add is_month_start, is_month_end, is_quarter_start, is_quarter_end, is_year_start, is_year_end accessors for DatetimeIndex / Timestamp which return a boolean array of whether the timestamp(s) are at the start/end of the month/quarter/year defined by the frequency of the DatetimeIndex / Timestamp (GH4565, GH6998)

Local variable usage has changed in pandas.eval()/DataFrame.eval()/DataFrame.query() (GH5987). For the DataFrame methods, two things have changed

- Column names are now given precedence over locals

- Local variables must be referred to explicitly. This means that even if you have a local variable that is not a column you must still refer to it with the '@' prefix.

- You can have an expression like df.query('@a < a') with no complaints from pandas about ambiguity of the name a.

- The top-level pandas.eval() function does not allow you to use the '@' prefix and provides you with an error message telling you so.

- NameResolutionError was removed because it isn’t necessary anymore.
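A minimal sketch of the '@' prefix, assuming a recent pandas; the helper rows_above and the sample frame are hypothetical names used only for this illustration. The local variable and the column deliberately share the name a to show that the two no longer collide.

```python
import pandas as pd


def rows_above(frame, a):
    # Inside the query string, '@a' is the local variable `a`,
    # while the bare name 'a' is the column of the same name.
    return frame.query('@a < a')


df = pd.DataFrame({'a': [1, 2, 3, 4]})
result = rows_above(df, 2)
```

Here result keeps only the rows whose column a is greater than the local value 2.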

Define and document the order of column vs index names in query/eval (GH6676)

concat will now concatenate mixed Series and DataFrames using the Series name or numbering columns as needed (GH2385). See the docs

Slicing and advanced/boolean indexing operations on Index classes as well as Index.delete() and Index.drop() methods will no longer change the type of the resulting index (GH6440, GH7040)

In [6]: i = pd.Index([1, 2, 3, 'a' , 'b', 'c'])

In [7]: i[[0,1,2]]
Out[7]: Index([1, 2, 3], dtype='object')

In [8]: i.drop(['a', 'b', 'c'])
Out[8]: Index([1, 2, 3], dtype='object')

Previously, the above operation would return Int64Index. If you’d like to do this manually, use Index.astype()

In [9]: i[[0,1,2]].astype(np.int_)
Out[9]: Int64Index([1, 2, 3], dtype='int32')

set_index no longer converts MultiIndexes to an Index of tuples. For example, the old behavior returned an Index in this case (GH6459):

# Old behavior, cast MultiIndex to an Index
In [10]: tuple_ind
Out[10]: Index([(u'a', u'c'), (u'a', u'd'), (u'b', u'c'), (u'b', u'd')], dtype='object')

In [11]: df_multi.set_index(tuple_ind)
Out[11]:
               0         1
(a, c)  0.471435 -1.190976
(a, d)  1.432707 -0.312652
(b, c) -0.720589  0.887163
(b, d)  0.859588 -0.636524

# New behavior
In [12]: mi
Out[12]:
MultiIndex(levels=[[u'a', u'b'], [u'c', u'd']],
           labels=[[0, 0, 1, 1], [0, 1, 0, 1]])

In [13]: df_multi.set_index(mi)
Out[13]:
            0         1
a c  0.471435 -1.190976
  d  1.432707 -0.312652
b c -0.720589  0.887163
  d  0.859588 -0.636524

This also applies when passing multiple indices to set_index:

# Old output, 2-level MultiIndex of tuples
In [14]: df_multi.set_index([df_multi.index, df_multi.index])
Out[14]:
                      0         1
(a, c) (a, c)  0.471435 -1.190976
(a, d) (a, d)  1.432707 -0.312652
(b, c) (b, c) -0.720589  0.887163
(b, d) (b, d)  0.859588 -0.636524

# New output, 4-level MultiIndex
In [15]: df_multi.set_index([df_multi.index, df_multi.index])
Out[15]:
                0         1
a c a c  0.471435 -1.190976
  d a d  1.432707 -0.312652
b c b c -0.720589  0.887163
  d b d  0.859588 -0.636524

pairwise keyword was added to the statistical moment functions rolling_cov, rolling_corr, ewmcov, ewmcorr, expanding_cov, expanding_corr to allow the calculation of moving window covariance and correlation matrices (GH4950). See Computing rolling pairwise covariances and correlations in the docs.

In [16]: df = DataFrame(np.random.randn(10,4),columns=list('ABCD'))

In [17]: covs = rolling_cov(df[['A','B','C']], df[['B','C','D']], 5, pairwise=True)

In [18]: covs[df.index[-1]]
Out[18]:
          B         C         D
A  0.128104  0.183628 -0.047358
B  0.856265  0.058945  0.145447
C  0.058945  0.335350  0.390637

Series.iteritems() is now lazy (returns an iterator rather than a list). This was the documented behavior prior to 0.14. (GH6760)

Added nunique and value_counts functions to Index for counting unique elements. (GH6734)

stack and unstack now raise a ValueError when the level keyword refers to a non-unique item in the Index (previously raised a KeyError). (GH6738)

drop unused order argument from Series.sort; args now are in the same order as Series.order; add na_position arg to conform to Series.order (GH6847)

default sorting algorithm for Series.order is now quicksort, to conform with Series.sort (and numpy defaults)

add inplace keyword to Series.order/sort to make them inverses (GH6859)

DataFrame.sort now places NaNs at the beginning or end of the sort according to the na_position parameter. (GH3917)

concat accepts a TextFileReader again; rejecting it affected a common user idiom and was a regression from 0.13.1 (GH6583)

Added factorize functions to Index and Series to get indexer and unique values (GH7090)

describe on a DataFrame with a mix of Timestamp and string like objects returns a different Index (GH7088). Previously the index was unintentionally sorted.

Arithmetic operations with only bool dtypes now give a warning indicating that they are evaluated in Python space for +, -, and * operations and raise for all others (GH7011, GH6762, GH7015, GH7210)

x = pd.Series(np.random.rand(10) > 0.5)
y = True
x + y  # warning generated: should do x | y instead
x / y  # this raises because it doesn't make sense

NotImplementedError: operator '/' not implemented for bool dtypes

In HDFStore, select_as_multiple will always raise a KeyError, when a key or the selector is not found (GH6177)

df['col'] = value and df.loc[:,'col'] = value are now completely equivalent; previously the .loc would not necessarily coerce the dtype of the resultant series (GH6149)

dtypes and ftypes now return a series with dtype=object on empty containers (GH5740)

df.to_csv will now return a string of the CSV data if neither a target path nor a buffer is provided (GH6061)
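As a small sketch of this behaviour (assuming a recent pandas; the frame contents are arbitrary), calling to_csv without a path or buffer hands back the CSV text directly:

```python
import pandas as pd

df = pd.DataFrame({'A': [1, 2], 'B': ['x', 'y']})

# No path or buffer is given, so the CSV data comes back as a string.
csv_text = df.to_csv(index=False)
```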

pd.infer_freq() will now raise a TypeError if given an invalid Series/Index type (GH6407, GH6463)

A tuple passed to DataFrame.sort_index will be interpreted as the levels of the index, rather than requiring a list of tuples (GH4370)

all offset operations now return Timestamp types (rather than datetime), Business/Week frequencies were incorrect (GH4069)

to_excel now converts np.inf into a string representation, customizable by the inf_rep keyword argument (Excel has no native inf representation) (GH6782)

Replace pandas.compat.scipy.scoreatpercentile with numpy.percentile (GH6810)

.quantile on a datetime[ns] series now returns Timestamp instead of np.datetime64 objects (GH6810)

change AssertionError to TypeError for invalid types passed to concat (GH6583)

Raise a TypeError when DataFrame is passed an iterator as the data argument (GH5357)

Display Changes¶

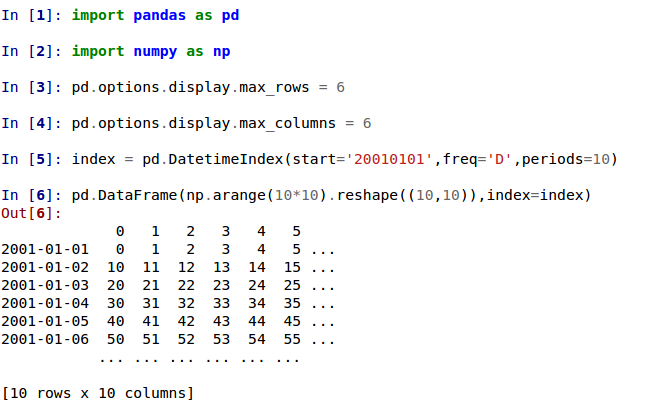

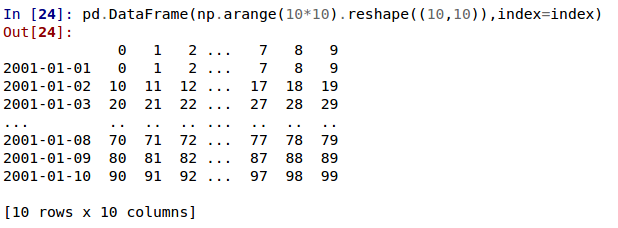

The default way of printing large DataFrames has changed. DataFrames exceeding max_rows and/or max_columns are now displayed in a centrally truncated view, consistent with the printing of a pandas.Series (GH5603).

In previous versions, a DataFrame was truncated once the dimension constraints were reached and an ellipsis (...) signaled that part of the data was cut off.

In the current version, large DataFrames are centrally truncated, showing a preview of head and tail in both dimensions.

allow option 'truncate' for display.show_dimensions to only show the dimensions if the frame is truncated (GH6547).

The default for display.show_dimensions will now be truncate. This is consistent with how Series display length.

In [19]: dfd = pd.DataFrame(np.arange(25).reshape(-1,5), index=[0,1,2,3,4], columns=[0,1,2,3,4])

# show dimensions since this is truncated
In [20]: with pd.option_context('display.max_rows', 2, 'display.max_columns', 2,
   ....:                        'display.show_dimensions', 'truncate'):
   ....:     print(dfd)
   ....:
     0 ...   4
0    0 ...   4
..  .. ...  ..
4   20 ...  24

[5 rows x 5 columns]

# will not show dimensions since it is not truncated
In [21]: with pd.option_context('display.max_rows', 10, 'display.max_columns', 40,
   ....:                        'display.show_dimensions', 'truncate'):
   ....:     print(dfd)
   ....:
    0   1   2   3   4
0   0   1   2   3   4
1   5   6   7   8   9
2  10  11  12  13  14
3  15  16  17  18  19
4  20  21  22  23  24

Fixed a regression in the display of a MultiIndexed Series when display.max_rows is less than the length of the series (GH7101)

Fixed a bug in the HTML repr of a truncated Series or DataFrame not showing the class name with the large_repr set to ‘info’ (GH7105)

The verbose keyword in DataFrame.info(), which controls whether to shorten the info representation, is now None by default. This will follow the global setting in display.max_info_columns. The global setting can be overridden with verbose=True or verbose=False.

Fixed a bug with the info repr not honoring the display.max_info_columns setting (GH6939)

Offset/freq info now in Timestamp __repr__ (GH4553)

Text Parsing API Changes¶

read_csv()/read_table() will now be noisier w.r.t. invalid options rather than falling back to the PythonParser.

- Raise ValueError when sep specified with delim_whitespace=True in read_csv()/read_table() (GH6607)

- Raise ValueError when engine='c' specified with unsupported options in read_csv()/read_table() (GH6607)

- Raise ValueError when fallback to python parser causes options to be ignored (GH6607)

- Produce ParserWarning on fallback to python parser when no options are ignored (GH6607)

- Translate sep='\s+' to delim_whitespace=True in read_csv()/read_table() if no other C-unsupported options specified (GH6607)
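A minimal sketch of parsing whitespace-delimited text with sep='\s+', assuming a recent pandas and Python 3; the sample text is made up for the illustration. Per the last item above, this separator is handled as whitespace delimiting.

```python
import io

import pandas as pd

# Columns are separated by runs of spaces of varying width.
text = "a  b   c\n1  2   3\n4  5   6\n"

df = pd.read_csv(io.StringIO(text), sep=r'\s+')
```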

Groupby API Changes¶

More consistent behaviour for some groupby methods:

groupby head and tail now act more like filter rather than an aggregation:

In [22]: df = pd.DataFrame([[1, 2], [1, 4], [5, 6]], columns=['A', 'B'])

In [23]: g = df.groupby('A')

In [24]: g.head(1)  # filters DataFrame
Out[24]:
   A  B
0  1  2
2  5  6

In [25]: g.apply(lambda x: x.head(1))  # used to simply fall-through
Out[25]:
     A  B
A
1 0  1  2
5 2  5  6

groupby head and tail respect column selection:

In [26]: g[['B']].head(1)
Out[26]:
   B
0  2
2  6

groupby nth now reduces by default; filtering can be achieved by passing as_index=False. With an optional dropna argument to ignore NaN. See the docs.

Reducing

In [27]: df = DataFrame([[1, np.nan], [1, 4], [5, 6]], columns=['A', 'B'])

In [28]: g = df.groupby('A')

In [29]: g.nth(0)
Out[29]:
    B
A
1 NaN
5   6

# this is equivalent to g.first()
In [30]: g.nth(0, dropna='any')
Out[30]:
   B
A
1  4
5  6

# this is equivalent to g.last()
In [31]: g.nth(-1, dropna='any')
Out[31]:
   B
A
1  4
5  6

Filtering

In [32]: gf = df.groupby('A',as_index=False)

In [33]: gf.nth(0)
Out[33]:
   A   B
0  1 NaN
2  5   6

In [34]: gf.nth(0, dropna='any')
Out[34]:
   A  B
0  1  4
1  5  6

groupby will now not return the grouped column for non-cython functions (GH5610, GH5614, GH6732), as it's already the index

In [35]: df = DataFrame([[1, np.nan], [1, 4], [5, 6], [5, 8]], columns=['A', 'B'])

In [36]: g = df.groupby('A')

In [37]: g.count()
Out[37]:
   B
A
1  1
5  2

In [38]: g.describe()
Out[38]:
                B
A
1 count  1.000000
  mean   4.000000
  std         NaN
  min    4.000000
  25%    4.000000
  50%    4.000000
  75%    4.000000
...           ...
5 mean   7.000000
  std    1.414214
  min    6.000000
  25%    6.500000
  50%    7.000000
  75%    7.500000
  max    8.000000

[16 rows x 1 columns]

Passing as_index=False will leave the grouped column in place (this is not a change in 0.14.0)

In [39]: df = DataFrame([[1, np.nan], [1, 4], [5, 6], [5, 8]], columns=['A', 'B'])

In [40]: g = df.groupby('A',as_index=False)

In [41]: g.count()
Out[41]:
   A  B
0  1  1
1  5  2

In [42]: g.describe()
Out[42]:
         A         B
0 count  2  1.000000
  mean   1  4.000000
  std    0       NaN
  min    1  4.000000
  25%    1  4.000000
  50%    1  4.000000
  75%    1  4.000000
...     ..       ...
1 mean   5  7.000000
  std    0  1.414214
  min    5  6.000000
  25%    5  6.500000
  50%    5  7.000000
  75%    5  7.500000
  max    5  8.000000

[16 rows x 2 columns]

Allow specification of a more complex groupby via pd.Grouper, such as grouping by a Time and a string field simultaneously. See the docs. (GH3794)
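A minimal sketch of grouping by a time field and a string field at once with pd.Grouper, assuming a recent pandas. The column names and values are hypothetical, and daily bins (freq='D') are used only to keep the example small.

```python
import datetime

import pandas as pd

df = pd.DataFrame({
    'Buyer': ['Carl', 'Carl', 'Joe'],
    'Quantity': [1, 5, 8],
    'Date': [datetime.datetime(2013, 10, 1, 13, 0),
             datetime.datetime(2013, 10, 1, 20, 0),
             datetime.datetime(2013, 10, 2, 10, 0)],
})

# Bin the 'Date' column by calendar day and group by 'Buyer' at the same time.
totals = df.groupby([pd.Grouper(key='Date', freq='D'), 'Buyer'])['Quantity'].sum()
```

Both of Carl's purchases on 2013-10-01 fall into the same daily bin, so they are summed together.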

Better propagation/preservation of Series names when performing groupby operations:

- SeriesGroupBy.agg will ensure that the name attribute of the original series is propagated to the result (GH6265).

- If the function provided to GroupBy.apply returns a named series, the name of the series will be kept as the name of the column index of the DataFrame returned by GroupBy.apply (GH6124). This facilitates DataFrame.stack operations where the name of the column index is used as the name of the inserted column containing the pivoted data.

SQL¶

The SQL reading and writing functions now support more database flavors through SQLAlchemy (GH2717, GH4163, GH5950, GH6292). All databases supported by SQLAlchemy can be used, such as PostgreSQL, MySQL, Oracle, Microsoft SQL server (see documentation of SQLAlchemy on included dialects).

The functionality of providing DBAPI connection objects will only be supported for sqlite3 in the future. The 'mysql' flavor is deprecated.

The new functions read_sql_query() and read_sql_table() are introduced. The function read_sql() is kept as a convenience wrapper around the other two and will delegate to specific function depending on the provided input (database table name or sql query).

In practice, you have to provide a SQLAlchemy engine to the sql functions. To connect with SQLAlchemy you use the create_engine() function to create an engine object from database URI. You only need to create the engine once per database you are connecting to. For an in-memory sqlite database:

In [43]: from sqlalchemy import create_engine

# Create your connection.

In [44]: engine = create_engine('sqlite:///:memory:')

This engine can then be used to write or read data to/from this database:

In [45]: df = pd.DataFrame({'A': [1,2,3], 'B': ['a', 'b', 'c']})

In [46]: df.to_sql('db_table', engine, index=False)

You can read data from a database by specifying the table name:

In [47]: pd.read_sql_table('db_table', engine)

Out[47]:

A B

0 1 a

1 2 b

2 3 c

or by specifying a sql query:

In [48]: pd.read_sql_query('SELECT * FROM db_table', engine)

Out[48]:

A B

0 1 a

1 2 b

2 3 c

Some other enhancements to the sql functions include:

- support for writing the index. This can be controlled with the index keyword (default is True).

- specify the column label to use when writing the index with index_label.

- specify string columns to parse as datetimes with the parse_dates keyword in read_sql_query() and read_sql_table().
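The three keywords above can be sketched together. To avoid a SQLAlchemy dependency, this illustration uses a sqlite3 DBAPI connection, which the text above says remains supported; it assumes a recent pandas, and the table and column names (db_table, row_id, when) are hypothetical.

```python
import sqlite3

import pandas as pd

conn = sqlite3.connect(':memory:')

df = pd.DataFrame({'A': [1, 2],
                   'when': ['2014-05-31', '2014-06-01']})

# Write the index as well, under an explicit column label.
df.to_sql('db_table', conn, index=True, index_label='row_id')

# Read back, asking pandas to parse the string column as datetimes.
out = pd.read_sql_query('SELECT * FROM db_table', conn,
                        parse_dates=['when'])
conn.close()
```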

Warning

Some of the existing functions or function aliases have been deprecated and will be removed in future versions. This includes: tquery, uquery, read_frame, frame_query, write_frame.

Warning

The support for the ‘mysql’ flavor when using DBAPI connection objects has been deprecated. MySQL will be further supported with SQLAlchemy engines (GH6900).

MultiIndexing Using Slicers¶

In 0.14.0 we added a new way to slice multi-indexed objects. You can slice a multi-index by providing multiple indexers.

You can provide any of the selectors as if you are indexing by label, see Selection by Label, including slices, lists of labels, labels, and boolean indexers.

You can use slice(None) to select all the contents of that level. You do not need to specify all the deeper levels, they will be implied as slice(None).

As usual, both sides of the slicers are included as this is label indexing.

See the docs See also issues (GH6134, GH4036, GH3057, GH2598, GH5641, GH7106)

Warning

You should specify all axes in the .loc specifier, meaning the indexer for the index and for the columns. There are some ambiguous cases where the passed indexer could be misinterpreted as indexing both axes, rather than into, say, the MultiIndex for the rows.

You should do this:

df.loc[(slice('A1','A3'),.....),:]

rather than this:

df.loc[(slice('A1','A3'),.....)]

Warning

You will need to make sure that the selection axes are fully lexsorted!
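A minimal sketch of sorting before slicing, assuming a recent pandas, where sort_index() plays the role that sortlevel() plays in the session that follows; the frame and its labels are made up for the illustration.

```python
import pandas as pd

# Build a frame whose MultiIndex is deliberately out of order.
index = pd.MultiIndex.from_tuples([('b', 2), ('a', 1), ('b', 1), ('a', 2)])
df = pd.DataFrame({'x': [0, 1, 2, 3]}, index=index)

# Lexsort the row index first, then slice with both axes specified.
df_sorted = df.sort_index()
idx = pd.IndexSlice
subset = df_sorted.loc[idx['a':'b', [2]], :]
```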

In [49]: def mklbl(prefix,n):

....: return ["%s%s" % (prefix,i) for i in range(n)]

....:

In [50]: index = MultiIndex.from_product([mklbl('A',4),

....: mklbl('B',2),

....: mklbl('C',4),

....: mklbl('D',2)])

....:

In [51]: columns = MultiIndex.from_tuples([('a','foo'),('a','bar'),

....: ('b','foo'),('b','bah')],

....: names=['lvl0', 'lvl1'])

....:

In [52]: df = DataFrame(np.arange(len(index)*len(columns)).reshape((len(index),len(columns))),

....: index=index,

....: columns=columns).sortlevel().sortlevel(axis=1)

....:

In [53]: df

Out[53]:

lvl0 a b

lvl1 bar foo bah foo

A0 B0 C0 D0 1 0 3 2

D1 5 4 7 6

C1 D0 9 8 11 10

D1 13 12 15 14

C2 D0 17 16 19 18

D1 21 20 23 22

C3 D0 25 24 27 26

... ... ... ... ...

A3 B1 C0 D1 229 228 231 230

C1 D0 233 232 235 234

D1 237 236 239 238

C2 D0 241 240 243 242

D1 245 244 247 246

C3 D0 249 248 251 250

D1 253 252 255 254

[64 rows x 4 columns]

Basic multi-index slicing using slices, lists, and labels.

In [54]: df.loc[(slice('A1','A3'),slice(None), ['C1','C3']),:]

Out[54]:

lvl0 a b

lvl1 bar foo bah foo

A1 B0 C1 D0 73 72 75 74

D1 77 76 79 78

C3 D0 89 88 91 90

D1 93 92 95 94

B1 C1 D0 105 104 107 106

D1 109 108 111 110

C3 D0 121 120 123 122

... ... ... ... ...

A3 B0 C1 D1 205 204 207 206

C3 D0 217 216 219 218

D1 221 220 223 222

B1 C1 D0 233 232 235 234

D1 237 236 239 238

C3 D0 249 248 251 250

D1 253 252 255 254

[24 rows x 4 columns]

You can use a pd.IndexSlice to shortcut the creation of these slices

In [55]: idx = pd.IndexSlice

In [56]: df.loc[idx[:,:,['C1','C3']],idx[:,'foo']]

Out[56]:

lvl0 a b

lvl1 foo foo

A0 B0 C1 D0 8 10

D1 12 14

C3 D0 24 26

D1 28 30

B1 C1 D0 40 42

D1 44 46

C3 D0 56 58

... ... ...

A3 B0 C1 D1 204 206

C3 D0 216 218

D1 220 222

B1 C1 D0 232 234

D1 236 238

C3 D0 248 250

D1 252 254

[32 rows x 2 columns]

It is possible to perform quite complicated selections using this method on multiple axes at the same time.

In [57]: df.loc['A1',(slice(None),'foo')]

Out[57]:

lvl0 a b

lvl1 foo foo

B0 C0 D0 64 66

D1 68 70

C1 D0 72 74

D1 76 78

C2 D0 80 82

D1 84 86

C3 D0 88 90

... ... ...

B1 C0 D1 100 102

C1 D0 104 106

D1 108 110

C2 D0 112 114

D1 116 118

C3 D0 120 122

D1 124 126

[16 rows x 2 columns]

In [58]: df.loc[idx[:,:,['C1','C3']],idx[:,'foo']]

Out[58]:

lvl0 a b

lvl1 foo foo

A0 B0 C1 D0 8 10

D1 12 14

C3 D0 24 26

D1 28 30

B1 C1 D0 40 42

D1 44 46

C3 D0 56 58

... ... ...

A3 B0 C1 D1 204 206

C3 D0 216 218

D1 220 222

B1 C1 D0 232 234

D1 236 238

C3 D0 248 250

D1 252 254

[32 rows x 2 columns]

Using a boolean indexer you can provide selection related to the values.

In [59]: mask = df[('a','foo')]>200

In [60]: df.loc[idx[mask,:,['C1','C3']],idx[:,'foo']]

Out[60]:

lvl0 a b

lvl1 foo foo

A3 B0 C1 D1 204 206

C3 D0 216 218

D1 220 222

B1 C1 D0 232 234

D1 236 238

C3 D0 248 250

D1 252 254

You can also specify the axis argument to .loc to interpret the passed slicers on a single axis.

In [61]: df.loc(axis=0)[:,:,['C1','C3']]

Out[61]:

lvl0 a b

lvl1 bar foo bah foo

A0 B0 C1 D0 9 8 11 10

D1 13 12 15 14

C3 D0 25 24 27 26

D1 29 28 31 30

B1 C1 D0 41 40 43 42

D1 45 44 47 46

C3 D0 57 56 59 58

... ... ... ... ...

A3 B0 C1 D1 205 204 207 206

C3 D0 217 216 219 218

D1 221 220 223 222

B1 C1 D0 233 232 235 234

D1 237 236 239 238

C3 D0 249 248 251 250

D1 253 252 255 254

[32 rows x 4 columns]

Furthermore you can set the values using these methods

In [62]: df2 = df.copy()

In [63]: df2.loc(axis=0)[:,:,['C1','C3']] = -10

In [64]: df2

Out[64]:

lvl0 a b

lvl1 bar foo bah foo

A0 B0 C0 D0 1 0 3 2

D1 5 4 7 6

C1 D0 -10 -10 -10 -10

D1 -10 -10 -10 -10

C2 D0 17 16 19 18

D1 21 20 23 22

C3 D0 -10 -10 -10 -10

... ... ... ... ...

A3 B1 C0 D1 229 228 231 230

C1 D0 -10 -10 -10 -10

D1 -10 -10 -10 -10

C2 D0 241 240 243 242

D1 245 244 247 246

C3 D0 -10 -10 -10 -10

D1 -10 -10 -10 -10

[64 rows x 4 columns]

You can use a right-hand-side of an alignable object as well.

In [65]: df2 = df.copy()

In [66]: df2.loc[idx[:,:,['C1','C3']],:] = df2*1000

In [67]: df2

Out[67]:

lvl0 a b

lvl1 bar foo bah foo

A0 B0 C0 D0 1 0 3 2

D1 5 4 7 6

C1 D0 1000 0 3000 2000

D1 5000 4000 7000 6000

C2 D0 17 16 19 18

D1 21 20 23 22

C3 D0 9000 8000 11000 10000

... ... ... ... ...

A3 B1 C0 D1 229 228 231 230

C1 D0 113000 112000 115000 114000

D1 117000 116000 119000 118000

C2 D0 241 240 243 242

D1 245 244 247 246

C3 D0 121000 120000 123000 122000

D1 125000 124000 127000 126000

[64 rows x 4 columns]

Plotting¶

Hexagonal bin plots from DataFrame.plot with kind='hexbin' (GH5478), See the docs.

DataFrame.plot and Series.plot now support area plots by specifying kind='area' (GH6656), See the docs

Pie plots from Series.plot and DataFrame.plot with kind='pie' (GH6976), See the docs.

Plotting with Error Bars is now supported in the .plot method of DataFrame and Series objects (GH3796, GH6834), See the docs.

DataFrame.plot and Series.plot now support a table keyword for plotting matplotlib.Table, See the docs. The table keyword can receive the following values.

- False: Do nothing (default).

- True: Draw a table using the DataFrame or Series called plot method. Data will be transposed to meet matplotlib’s default layout.

- DataFrame or Series: Draw matplotlib.table using the passed data. The data will be drawn as displayed in the print method (not transposed automatically). Also, the helper function pandas.tools.plotting.table is added to create a table from a DataFrame or Series and add it to a matplotlib.Axes.

plot(legend='reverse') will now reverse the order of legend labels for most plot kinds. (GH6014)

Line plot and area plot can be stacked by stacked=True (GH6656)

Following keywords are now acceptable for DataFrame.plot() with kind='bar' and kind='barh':

- width: Specify the bar width. In previous versions, a static value of 0.5 was passed to matplotlib and could not be overridden. (GH6604)

- align: Specify the bar alignment. Default is center (different from matplotlib). In previous versions, pandas passed align='edge' to matplotlib and adjusted the location to center by itself, with the result that the align keyword was not applied as expected. (GH4525)

- position: Specify relative alignments for bar plot layout. From 0 (left/bottom-end) to 1 (right/top-end). Default is 0.5 (center). (GH6604)

Because of the change in the default align value, coordinates of bar plots are now located on integer values (0.0, 1.0, 2.0 ...). This is intended to place bar plots on the same coordinates as line plots. However, bar plots may differ unexpectedly when you manually adjust the bar location or drawing area, such as by using set_xlim, set_ylim, etc. In these cases, please modify your script to match the new coordinates.

The parallel_coordinates() function now takes argument color instead of colors. A FutureWarning is raised to alert that the old colors argument will not be supported in a future release. (GH6956)

The parallel_coordinates() and andrews_curves() functions now take positional argument frame instead of data. A FutureWarning is raised if the old data argument is used by name. (GH6956)

DataFrame.boxplot() now supports layout keyword (GH6769)

DataFrame.boxplot() has a new keyword argument, return_type. It accepts 'dict', 'axes', or 'both', in which case a namedtuple with the matplotlib axes and a dict of matplotlib Lines is returned.

Prior Version Deprecations/Changes¶

There are prior version deprecations that are taking effect as of 0.14.0.

- Remove DateRange in favor of DatetimeIndex (GH6816)

- Remove column keyword from DataFrame.sort (GH4370)

- Remove precision keyword from set_eng_float_format() (GH395)

- Remove force_unicode keyword from DataFrame.to_string(), DataFrame.to_latex(), and DataFrame.to_html(); these function encode in unicode by default (GH2224, GH2225)

- Remove nanRep keyword from DataFrame.to_csv() and DataFrame.to_string() (GH275)

- Remove unique keyword from HDFStore.select_column() (GH3256)

- Remove inferTimeRule keyword from Timestamp.offset() (GH391)

- Remove name keyword from get_data_yahoo() and get_data_google() ( commit b921d1a )

- Remove offset keyword from DatetimeIndex constructor ( commit 3136390 )

- Remove time_rule from several rolling-moment statistical functions, such as rolling_sum() (GH1042)

- Removed neg - boolean operations on numpy arrays in favor of inv ~, as this is going to be deprecated in numpy 1.9 (GH6960)

Deprecations¶

The pivot_table()/DataFrame.pivot_table() and crosstab() functions now take arguments index and columns instead of rows and cols. A FutureWarning is raised to alert that the old rows and cols arguments will not be supported in a future release (GH5505)
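A minimal sketch of the new index and columns arguments, assuming a recent pandas; the frame and its column names are hypothetical.

```python
import pandas as pd

sales = pd.DataFrame({'region': ['east', 'east', 'west', 'west'],
                      'product': ['x', 'y', 'x', 'y'],
                      'units': [1, 2, 3, 4]})

# index/columns replace the old rows/cols keywords.
table = pd.pivot_table(sales, values='units',
                       index='region', columns='product', aggfunc='sum')
```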

The DataFrame.drop_duplicates() and DataFrame.duplicated() methods now take argument subset instead of cols to better align with DataFrame.dropna(). A FutureWarning is raised to alert that the old cols arguments will not be supported in a future release (GH6680)
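As a small illustration of the subset keyword (assuming a recent pandas; the frame is made up), duplicates are judged on the listed columns only:

```python
import pandas as pd

df = pd.DataFrame({'k': [1, 1, 2], 'v': ['a', 'b', 'c']})

# Only column 'k' is considered when looking for duplicates.
deduped = df.drop_duplicates(subset=['k'])
flags = df.duplicated(subset=['k'])
```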

The DataFrame.to_csv() and DataFrame.to_excel() functions now take argument columns instead of cols. A FutureWarning is raised to alert that the old cols arguments will not be supported in a future release (GH6645)

Indexers will warn FutureWarning when used with a scalar indexer and a non-floating point Index (GH4892, GH6960)

# non-floating point indexes can only be indexed by integers / labels
In [1]: Series(1,np.arange(5))[3.0]
        pandas/core/index.py:469: FutureWarning: scalar indexers for index type Int64Index should be integers and not floating point
Out[1]: 1

In [2]: Series(1,np.arange(5)).iloc[3.0]
        pandas/core/index.py:469: FutureWarning: scalar indexers for index type Int64Index should be integers and not floating point
Out[2]: 1

In [3]: Series(1,np.arange(5)).iloc[3.0:4]
        pandas/core/index.py:527: FutureWarning: slice indexers when using iloc should be integers and not floating point
Out[3]:
3    1
dtype: int64

# these are Float64Indexes, so integer or floating point is acceptable
In [4]: Series(1,np.arange(5.))[3]
Out[4]: 1

In [5]: Series(1,np.arange(5.))[3.0]
Out[5]: 1

Numpy 1.9 compat w.r.t. deprecation warnings (GH6960)

Panel.shift() now has a function signature that matches DataFrame.shift(). The old positional argument lags has been changed to a keyword argument periods with a default value of 1. A FutureWarning is raised if the old argument lags is used by name. (GH6910)

The order keyword argument of factorize() will be removed. (GH6926).

Remove the copy keyword from DataFrame.xs(), Panel.major_xs(), Panel.minor_xs(). A view will be returned if possible, otherwise a copy will be made. Previously the user could think that copy=False would ALWAYS return a view. (GH6894)

The parallel_coordinates() function now takes argument color instead of colors. A FutureWarning is raised to alert that the old colors argument will not be supported in a future release. (GH6956)

The parallel_coordinates() and andrews_curves() functions now take positional argument frame instead of data. A FutureWarning is raised if the old data argument is used by name. (GH6956)

The support for the ‘mysql’ flavor when using DBAPI connection objects has been deprecated. MySQL will be further supported with SQLAlchemy engines (GH6900).

The following io.sql functions have been deprecated: tquery, uquery, read_frame, frame_query, write_frame.

The percentile_width keyword argument in describe() has been deprecated. Use the percentiles keyword instead, which takes a list of percentiles to display. The default output is unchanged.
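A minimal sketch of the percentiles keyword, assuming a recent pandas; the data is arbitrary.

```python
import pandas as pd

s = pd.Series(range(1, 101))

# Ask for the 10th and 90th percentiles instead of the default quartiles.
summary = s.describe(percentiles=[0.1, 0.9])
```

The requested percentiles appear in the result under the labels '10%' and '90%'.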

The default return type of boxplot() will change from a dict to a matplotlib Axes in a future release. You can use the future behavior now by passing return_type='axes' to boxplot.

Enhancements¶

DataFrame and Series will create a MultiIndex object if passed a tuples dict, See the docs (GH3323)

In [68]: Series({('a', 'b'): 1, ('a', 'a'): 0,
   ....:         ('a', 'c'): 2, ('b', 'a'): 3, ('b', 'b'): 4})
   ....:
Out[68]:
a  a    0
   b    1
   c    2
b  a    3
   b    4
dtype: int64

In [69]: DataFrame({('a', 'b'): {('A', 'B'): 1, ('A', 'C'): 2},
   ....:            ('a', 'a'): {('A', 'C'): 3, ('A', 'B'): 4},
   ....:            ('a', 'c'): {('A', 'B'): 5, ('A', 'C'): 6},
   ....:            ('b', 'a'): {('A', 'C'): 7, ('A', 'B'): 8},
   ....:            ('b', 'b'): {('A', 'D'): 9, ('A', 'B'): 10}})
   ....:
Out[69]:
       a           b
       a   b   c   a   b
A B    4   1   5   8  10
  C    3   2   6   7 NaN
  D  NaN NaN NaN NaN   9

Added the sym_diff method to Index (GH5543)

DataFrame.to_latex now takes a longtable keyword, which if True will return a table in a longtable environment. (GH6617)

Add option to turn off escaping in DataFrame.to_latex (GH6472)

pd.read_clipboard will, if the keyword sep is unspecified, try to detect data copied from a spreadsheet and parse accordingly. (GH6223)

Joining a singly-indexed DataFrame with a multi-indexed DataFrame (GH3662)

See the docs. Joining multi-index DataFrames on both the left and right is not yet supported.

In [70]: household = DataFrame(dict(household_id = [1,2,3],
   ....:                            male = [0,1,0],
   ....:                            wealth = [196087.3,316478.7,294750]),
   ....:                       columns = ['household_id','male','wealth']
   ....:                      ).set_index('household_id')
   ....:

In [71]: household
Out[71]:
              male    wealth
household_id
1                0  196087.3
2                1  316478.7
3                0  294750.0

In [72]: portfolio = DataFrame(dict(household_id = [1,2,2,3,3,3,4],
   ....:                            asset_id = ["nl0000301109","nl0000289783","gb00b03mlx29",
   ....:                                        "gb00b03mlx29","lu0197800237","nl0000289965",np.nan],
   ....:                            name = ["ABN Amro","Robeco","Royal Dutch Shell","Royal Dutch Shell",
   ....:                                    "AAB Eastern Europe Equity Fund","Postbank BioTech Fonds",np.nan],
   ....:                            share = [1.0,0.4,0.6,0.15,0.6,0.25,1.0]),
   ....:                       columns = ['household_id','asset_id','name','share']
   ....:                      ).set_index(['household_id','asset_id'])
   ....:

In [73]: portfolio
Out[73]:
                                                     name  share
household_id asset_id
1            nl0000301109                        ABN Amro   1.00
2            nl0000289783                          Robeco   0.40
             gb00b03mlx29               Royal Dutch Shell   0.60
3            gb00b03mlx29               Royal Dutch Shell   0.15
             lu0197800237  AAB Eastern Europe Equity Fund   0.60
             nl0000289965          Postbank BioTech Fonds   0.25
4            NaN                                      NaN   1.00

In [74]: household.join(portfolio, how='inner')
Out[74]:
                           male    wealth                            name  \
household_id asset_id
1            nl0000301109     0  196087.3                        ABN Amro
2            nl0000289783     1  316478.7                          Robeco
             gb00b03mlx29     1  316478.7               Royal Dutch Shell
3            gb00b03mlx29     0  294750.0               Royal Dutch Shell
             lu0197800237     0  294750.0  AAB Eastern Europe Equity Fund
             nl0000289965     0  294750.0          Postbank BioTech Fonds

                           share
household_id asset_id
1            nl0000301109   1.00
2            nl0000289783   0.40
             gb00b03mlx29   0.60
3            gb00b03mlx29   0.15
             lu0197800237   0.60
             nl0000289965   0.25

quotechar, doublequote, and escapechar can now be specified when using DataFrame.to_csv (GH5414, GH4528)
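A minimal sketch of how these keywords can be combined, assuming a small made-up frame; the column names and values are illustrative only. It writes embedded quote characters with a backslash escape instead of doubling them, then reads the text back with matching settings.

```python
import io

import pandas as pd

# Hypothetical data: one value contains embedded double quotes.
df = pd.DataFrame({"text": ['say "hi"', "plain"], "n": [1, 2]})

# Escape embedded quote characters with a backslash instead of doubling them.
csv_text = df.to_csv(index=False, quotechar='"', doublequote=False, escapechar="\\")
print(csv_text)

# Read the text back with the same quoting settings to check the round trip.
restored = pd.read_csv(
    io.StringIO(csv_text), quotechar='"', doublequote=False, escapechar="\\"
)
print(restored)
```

The reader has to be given the same quotechar, doublequote and escapechar values as the writer, otherwise the escaped quotes are not decoded.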

Partially sort by only the specified levels of a MultiIndex with the sort_remaining boolean kwarg. (GH3984)
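The following sketch illustrates the idea of a partial sort. It uses sort_index(level=..., sort_remaining=False), which is the spelling in later pandas versions and an assumption here; the entry above does not name the method that carries the keyword in 0.14.0. The index values are made up.

```python
import pandas as pd

# Hypothetical two-level index, deliberately unsorted.
index = pd.MultiIndex.from_tuples(
    [("b", 2), ("a", 2), ("b", 1), ("a", 1)], names=["key", "num"]
)
s = pd.Series(range(4), index=index)

# Sort on the "num" level only; "key" is not used as a secondary sort key.
by_num = s.sort_index(level="num", sort_remaining=False)

# Default behaviour: the remaining levels are sorted after "num" as well.
by_num_then_key = s.sort_index(level="num")

print(by_num)
print(by_num_then_key)
```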

Added to_julian_date to Timestamp and DatetimeIndex. The Julian Date is used primarily in astronomy and represents the number of days from noon, January 1, 4713 BC. Because nanoseconds are used to define the time in pandas, the actual range of dates that you can use is 1678 AD to 2262 AD. (GH4041)
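As a small illustration, the sketch below converts a single Timestamp and a DatetimeIndex. The dates are chosen for convenience: noon on January 1, 2000 is the well-known J2000.0 epoch, Julian Date 2451545.0.

```python
import pandas as pd

# Noon on 2000-01-01 corresponds to Julian Date 2451545.0 (the J2000.0 epoch).
ts = pd.Timestamp("2000-01-01 12:00:00")
jd = ts.to_julian_date()
print(jd)

# The same conversion applied element-wise to a DatetimeIndex (midnight values).
idx = pd.date_range("2000-01-01", periods=3, freq="D")
jd_index = idx.to_julian_date()
print(list(jd_index))
```

Midnight values end in .5 because the Julian Date counts days from noon.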

DataFrame.to_stata will now check data for compatibility with Stata data types and will upcast when needed. When it is not possible to losslessly upcast, a warning is issued (GH6327)

DataFrame.to_stata and StataWriter will accept keyword arguments time_stamp and data_label which allow the time stamp and dataset label to be set when creating a file. (GH6545)

pandas.io.gbq now handles reading unicode strings properly. (GH5940)

Holiday calendars are now available and can be used with the CustomBusinessDay offset (GH6719)
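A short sketch of the combination, assuming the USFederalHolidayCalendar class from pandas.tseries.holiday is used as the calendar; the start date is picked so that the next calendar day is a holiday followed by a weekend.

```python
import pandas as pd
from pandas.tseries.holiday import USFederalHolidayCalendar
from pandas.tseries.offsets import CustomBusinessDay

# Business-day offset that skips weekends and US federal holidays.
us_bday = CustomBusinessDay(calendar=USFederalHolidayCalendar())

start = pd.Timestamp("2014-07-03")  # a Thursday
next_day = start + us_bday          # skips Friday July 4 and the weekend
print(next_day)
```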

Float64Index is now backed by a float64 dtype ndarray instead of an object dtype array (GH6471).

Implemented Panel.pct_change (GH6904)

Added how option to rolling-moment functions to dictate how to handle resampling; rolling_max() defaults to max, rolling_min() defaults to min, and all others default to mean (GH6297)

CustomBusinessMonthBegin and CustomBusinessMonthEnd are now available (GH6866)

Series.quantile() and DataFrame.quantile() now accept an array of quantiles.

describe() now accepts an array of percentiles to include in the summary statistics (GH4196)
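The two entries above can be sketched together as follows; the data is made up, and the label format of the percentile rows ("10%", "90%") is what recent pandas versions produce.

```python
import pandas as pd

s = pd.Series([1, 2, 3, 4, 5])

# Passing a list of quantiles returns a Series indexed by the requested quantiles.
q = s.quantile([0.25, 0.5, 0.75])
print(q)

# describe() reports the requested percentiles alongside the usual statistics.
summary = s.describe(percentiles=[0.1, 0.9])
print(summary)
```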

pivot_table can now accept a Grouper through the index and columns keywords (GH6913)

In [75]: import datetime

In [76]: df = DataFrame({
   ....:     'Branch' : 'A A A A A B'.split(),
   ....:     'Buyer': 'Carl Mark Carl Carl Joe Joe'.split(),
   ....:     'Quantity': [1, 3, 5, 1, 8, 1],
   ....:     'Date' : [datetime.datetime(2013,11,1,13,0), datetime.datetime(2013,9,1,13,5),
   ....:               datetime.datetime(2013,10,1,20,0), datetime.datetime(2013,10,2,10,0),
   ....:               datetime.datetime(2013,11,1,20,0), datetime.datetime(2013,10,2,10,0)],
   ....:     'PayDay' : [datetime.datetime(2013,10,4,0,0), datetime.datetime(2013,10,15,13,5),
   ....:                 datetime.datetime(2013,9,5,20,0), datetime.datetime(2013,11,2,10,0),
   ....:                 datetime.datetime(2013,10,7,20,0), datetime.datetime(2013,9,5,10,0)]})
   ....:

In [77]: df
Out[77]:
  Branch Buyer                Date              PayDay  Quantity
0      A  Carl 2013-11-01 13:00:00 2013-10-04 00:00:00         1
1      A  Mark 2013-09-01 13:05:00 2013-10-15 13:05:00         3
2      A  Carl 2013-10-01 20:00:00 2013-09-05 20:00:00         5
3      A  Carl 2013-10-02 10:00:00 2013-11-02 10:00:00         1
4      A   Joe 2013-11-01 20:00:00 2013-10-07 20:00:00         8
5      B   Joe 2013-10-02 10:00:00 2013-09-05 10:00:00         1

In [78]: pivot_table(df, index=Grouper(freq='M', key='Date'),
   ....:             columns=Grouper(freq='M', key='PayDay'),
   ....:             values='Quantity', aggfunc=np.sum)
   ....:
Out[78]:
PayDay      2013-09-30  2013-10-31  2013-11-30
Date
2013-09-30         NaN           3         NaN
2013-10-31           6         NaN           1
2013-11-30         NaN           9         NaN

Arrays of strings can be wrapped to a specified width (str.wrap) (GH6999)
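A brief sketch with made-up strings: each element is re-flowed so that no line exceeds the given width, with line breaks inserted between words.

```python
import pandas as pd

s = pd.Series(["a long line of text", "short"])

# Wrap every string to at most 10 characters per line.
wrapped = s.str.wrap(10)
print(wrapped[0])
```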

Added Series.nsmallest() and Series.nlargest() methods, see the docs (GH3960)
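For illustration, with arbitrary values, the two methods return the n largest or smallest entries with their original index labels:

```python
import pandas as pd

s = pd.Series([3, 1, 4, 1, 5, 9, 2, 6])

top3 = s.nlargest(3)      # the three largest values, largest first
bottom2 = s.nsmallest(2)  # the two smallest values, smallest first

print(top3)
print(bottom2)
```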

PeriodIndex fully supports partial string indexing like DatetimeIndex (GH7043)

In [79]: prng = period_range('2013-01-01 09:00', periods=100, freq='H')

In [80]: ps = Series(np.random.randn(len(prng)), index=prng)

In [81]: ps
Out[81]:
2013-01-01 09:00    0.755414
2013-01-01 10:00    0.215269
2013-01-01 11:00    0.841009
2013-01-01 12:00   -1.445810
2013-01-01 13:00   -1.401973
...
2013-01-05 07:00    0.702562
2013-01-05 08:00   -0.850346
2013-01-05 09:00    1.176812
2013-01-05 10:00   -0.524336
2013-01-05 11:00    0.700908
2013-01-05 12:00    0.984188
Freq: H, Length: 100

In [82]: ps['2013-01-02']
Out[82]:
2013-01-02 00:00   -0.208499
2013-01-02 01:00    1.033801
2013-01-02 02:00   -2.400454
2013-01-02 03:00    2.030604
2013-01-02 04:00   -1.142631
...
2013-01-02 18:00   -3.563517
2013-01-02 19:00    1.321106
2013-01-02 20:00    0.152631
2013-01-02 21:00    0.164530
2013-01-02 22:00   -0.430096
2013-01-02 23:00    0.767369
Freq: H, Length: 24

read_excel can now read milliseconds in Excel dates and times with xlrd >= 0.9.3. (GH5945)

pd.stats.moments.rolling_var now uses Welford’s method for increased numerical stability (GH6817)

pd.expanding_apply and pd.rolling_apply now take args and kwargs that are passed on to the func (GH6289)

DataFrame.rank() now has a percentage rank option (GH5971)

Series.rank() now has a percentage rank option (GH5971)

Series.rank() and DataFrame.rank() now accept method='dense' for ranks without gaps (GH6514)
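The rank options above can be sketched on a small Series with one tie; the pct=True keyword is the percentage rank option as spelled in current pandas.

```python
import pandas as pd

s = pd.Series([10, 20, 20, 30])

# Dense ranking: tied values share a rank and the next rank is not skipped.
dense = s.rank(method="dense")

# Percentage rank: the default (average) rank divided by the number of values.
pct = s.rank(pct=True)

print(dense.tolist())
print(pct.tolist())
```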

Support passing encoding with xlwt (GH3710)

Refactor Block classes removing Block.items attributes to avoid duplication in item handling (GH6745, GH6988).

Testing statements updated to use specialized asserts (GH6175)

Performance¶

- Performance improvement when converting DatetimeIndex to floating ordinals using DatetimeConverter (GH6636)

- Performance improvement for DataFrame.shift (GH5609)

- Performance improvement in indexing into a multi-indexed Series (GH5567)

- Performance improvements in single-dtyped indexing (GH6484)

- Improve performance of DataFrame construction with certain offsets, by removing faulty caching (e.g. MonthEnd,BusinessMonthEnd), (GH6479)

- Improve performance of CustomBusinessDay (GH6584)

- Improve performance of slice indexing on Series with string keys (GH6341, GH6372)

- Performance improvement for DataFrame.from_records when reading a specified number of rows from an iterable (GH6700)

- Performance improvements in timedelta conversions for integer dtypes (GH6754)

- Improved performance of compatible pickles (GH6899)

- Improve performance in certain reindexing operations by optimizing take_2d (GH6749)

- GroupBy.count() is now implemented in Cython and is much faster for large numbers of groups (GH7016).

Experimental¶

There are no experimental changes in 0.14.0

Bug Fixes¶

- Bug in Series ValueError when index doesn’t match data (GH6532)

- Prevent segfault due to MultiIndex not being supported in HDFStore table format (GH1848)

- Bug in pd.DataFrame.sort_index where mergesort wasn’t stable when ascending=False (GH6399)

- Bug in pd.tseries.frequencies.to_offset when argument has leading zeroes (GH6391)

- Bug in version string gen. for dev versions with shallow clones / install from tarball (GH6127)

- Inconsistent tz parsing Timestamp / to_datetime for current year (GH5958)

- Indexing bugs with reordered indexes (GH6252, GH6254)

- Bug in .xs with a Series multiindex (GH6258, GH5684)

- Bug in conversion of string types to a DatetimeIndex with a specified frequency (GH6273, GH6274)

- Bug in eval where type-promotion failed for large expressions (GH6205)

- Bug in interpolate with inplace=True (GH6281)

- HDFStore.remove now handles start and stop (GH6177)

- HDFStore.select_as_multiple handles start and stop the same way as select (GH6177)

- HDFStore.select_as_coordinates and select_column works with a where clause that results in filters (GH6177)

- Regression in join of non_unique_indexes (GH6329)

- Issue with groupby agg with a single function and a mixed-type frame (GH6337)

- Bug in DataFrame.replace() when passing a non-bool to_replace argument (GH6332)

- Raise when trying to align on different levels of a multi-index assignment (GH3738)

- Bug in setting complex dtypes via boolean indexing (GH6345)

- Bug in TimeGrouper/resample when presented with a non-monotonic DatetimeIndex that would return invalid results. (GH4161)

- Bug in index name propagation in TimeGrouper/resample (GH4161)

- TimeGrouper has a more compatible API to the rest of the groupers (e.g. groups was missing) (GH3881)

- Bug in multiple grouping with a TimeGrouper depending on target column order (GH6764)

- Bug in pd.eval when parsing strings with possible tokens like '&' (GH6351)

- Correctly handle placement of -inf in Panels when dividing by integer 0 (GH6178)

- DataFrame.shift with axis=1 was raising (GH6371)

- Disabled clipboard tests until release time (run locally with nosetests -A disabled) (GH6048).

- Bug in DataFrame.replace() when passing a nested dict that contained keys not in the values to be replaced (GH6342)

- str.match ignored the na flag (GH6609).

- Bug in take with duplicate columns that were not consolidated (GH6240)

- Bug in interpolate changing dtypes (GH6290)

- Bug in Series.get, was using a buggy access method (GH6383)

- Bug in hdfstore queries of the form where=[('date', '>=', datetime(2013,1,1)), ('date', '<=', datetime(2014,1,1))] (GH6313)

- Bug in DataFrame.dropna with duplicate indices (GH6355)

- Regression in chained getitem indexing with embedded list-like from 0.12 (GH6394)

- Float64Index with nans not comparing correctly (GH6401)

- eval/query expressions with strings containing the @ character will now work (GH6366).

- Bug in Series.reindex when specifying a method with some nan values was inconsistent (noted on a resample) (GH6418)

- Bug in DataFrame.replace() where nested dicts were erroneously depending on the order of dictionary keys and values (GH5338).

- Perf issue in concatting with empty objects (GH3259)

- Clarify sorting of sym_diff on Index objects with NaN values (GH6444)

- Regression in MultiIndex.from_product with a DatetimeIndex as input (GH6439)

- Bug in str.extract when passed a non-default index (GH6348)

- Bug in str.split when passed pat=None and n=1 (GH6466)

- Bug in io.data.DataReader when passed "F-F_Momentum_Factor" and data_source="famafrench" (GH6460)

- Bug in sum of a timedelta64[ns] series (GH6462)

- Bug in resample with a timezone and certain offsets (GH6397)

- Bug in iat/iloc with duplicate indices on a Series (GH6493)

- Bug in read_html where nan’s were incorrectly being used to indicate missing values in text. Should use the empty string for consistency with the rest of pandas (GH5129).

- Bug in read_html tests where redirected invalid URLs would make one test fail (GH6445).

- Bug in multi-axis indexing using .loc on non-unique indices (GH6504)

- Bug that caused _ref_locs corruption when slice indexing across columns axis of a DataFrame (GH6525)

- Regression from 0.13 in the treatment of numpy datetime64 non-ns dtypes in Series creation (GH6529)

- .names attribute of MultiIndexes passed to set_index are now preserved (GH6459).

- Bug in setitem with a duplicate index and an alignable rhs (GH6541)

- Bug in setitem with .loc on mixed integer Indexes (GH6546)

- Bug in pd.read_stata which would use the wrong data types and missing values (GH6327)

- Bug in DataFrame.to_stata that led to data loss in certain cases, and where data could be exported using the wrong data types and missing values (GH6335)

- StataWriter replaces missing values in string columns by empty string (GH6802)

- Inconsistent types in Timestamp addition/subtraction (GH6543)

- Bug in preserving frequency across Timestamp addition/subtraction (GH4547)

- Bug in empty list lookup caused IndexError exceptions (GH6536, GH6551)

- Series.quantile raising on an object dtype (GH6555)

- Bug in .xs with a nan in level when dropped (GH6574)

- Bug in fillna with method='bfill/ffill' and datetime64[ns] dtype (GH6587)

- Bug in sql writing with mixed dtypes possibly leading to data loss (GH6509)

- Bug in Series.pop (GH6600)

- Bug in iloc indexing when positional indexer matched Int64Index of the corresponding axis and no reordering happened (GH6612)

- Bug in fillna with limit and value specified

- Bug in DataFrame.to_stata when columns have non-string names (GH4558)

- Bug in compat with np.compress, surfaced in (GH6658)

- Bug in binary operations with a rhs of a Series not aligning (GH6681)

- Bug in DataFrame.to_stata which incorrectly handles nan values and ignores with_index keyword argument (GH6685)

- Bug in resample with extra bins when using an evenly divisible frequency (GH4076)

- Bug in consistency of groupby aggregation when passing a custom function (GH6715)

- Bug in resample when how=None resample freq is the same as the axis frequency (GH5955)

- Bug in downcasting inference with empty arrays (GH6733)

- Bug in obj.blocks on sparse containers dropping all but the last items of same for dtype (GH6748)

- Bug in unpickling NaT (NaTType) (GH4606)

- Bug in DataFrame.replace() where regex metacharacters were being treated as regexs even when regex=False (GH6777).

- Bug in timedelta ops on 32-bit platforms (GH6808)

- Bug in setting a tz-aware index directly via .index (GH6785)

- Bug in expressions.py where numexpr would try to evaluate arithmetic ops (GH6762).

- Bug in Makefile where it didn’t remove Cython generated C files with make clean (GH6768)

- Bug with numpy < 1.7.2 when reading long strings from HDFStore (GH6166)

- Bug in DataFrame._reduce where non bool-like (0/1) integers were being converted into bools. (GH6806)

- Regression from 0.13 with fillna and a Series on datetime-like (GH6344)

- Bug in adding np.timedelta64 to DatetimeIndex with timezone outputs incorrect results (GH6818)

- Bug in DataFrame.replace() where changing a dtype through replacement would only replace the first occurrence of a value (GH6689)

- Better error message when passing a frequency of ‘MS’ in Period construction (GH5332)

- Bug in Series.__unicode__ when max_rows=None and the Series has more than 1000 rows. (GH6863)

- Bug in groupby.get_group where a datetimelike wasn’t always accepted (GH5267)

- Bug where groupby.get_group on a groupby created by TimeGrouper raises AttributeError (GH6914)

- Bug in DatetimeIndex.tz_localize and DatetimeIndex.tz_convert converting NaT incorrectly (GH5546)

- Bug in arithmetic operations affecting NaT (GH6873)

- Bug in Series.str.extract where the resulting Series from a single group match wasn’t renamed to the group name

- Bug in DataFrame.to_csv where setting index=False ignored the header kwarg (GH6186)

- Bug in DataFrame.plot and Series.plot, where the legend behaved inconsistently when plotting to the same axes repeatedly (GH6678)

- Internal tests for patching __finalize__ / bug in merge not finalizing (GH6923, GH6927)

- accept TextFileReader in concat, which was affecting a common user idiom (GH6583)

- Bug in C parser with leading whitespace (GH3374)

- Bug in C parser with delim_whitespace=True and \r-delimited lines

- Bug in python parser with explicit multi-index in row following column header (GH6893)

- Bug in Series.rank and DataFrame.rank that caused small floats (<1e-13) to all receive the same rank (GH6886)

- Bug in DataFrame.apply with functions that used *args or **kwargs and returned an empty result (GH6952)

- Bug in sum/mean on 32-bit platforms on overflows (GH6915)

- Moved Panel.shift to NDFrame.slice_shift and fixed to respect multiple dtypes. (GH6959)

- Bug where enabling subplots=True in DataFrame.plot on a frame with only a single column raises TypeError, and Series.plot raises AttributeError (GH6951)

- Bug in DataFrame.plot draws unnecessary axes when enabling subplots and kind=scatter (GH6951)

- Bug in read_csv from a filesystem with non-utf-8 encoding (GH6807)

- Bug in iloc when setting / aligning (GH6766)

- Bug causing UnicodeEncodeError when get_dummies called with unicode values and a prefix (GH6885)

- Bug in timeseries-with-frequency plot cursor display (GH5453)

- Bug surfaced in groupby.plot when using a Float64Index (GH7025)

- Stopped tests from failing if options data isn’t able to be downloaded from Yahoo (GH7034)

- Bug in parallel_coordinates and radviz where reordering of class column caused possible color/class mismatch (GH6956)

- Bug in radviz and andrews_curves where multiple values of ‘color’ were being passed to plotting method (GH6956)

- Bug in Float64Index.isin() where containing nans would make indices claim that they contained all the things (GH7066).

- Bug in DataFrame.boxplot where it failed to use the axis passed as the ax argument (GH3578)

- Bug in the XlsxWriter and XlwtWriter implementations that resulted in datetime columns being formatted without the time (GH7075)

- read_fwf() treats None in colspec like regular python slices. It now reads from the beginning or until the end of the line when colspec contains a None (previously raised a TypeError)

- Bug in cache coherence with chained indexing and slicing; add _is_view property to NDFrame to correctly predict views; mark is_copy on xs only if it’s an actual copy (and not a view) (GH7084)

- Bug in DatetimeIndex creation from string ndarray with dayfirst=True (GH5917)

- Bug where a MultiIndex created by MultiIndex.from_arrays from a DatetimeIndex doesn’t preserve freq and tz (GH7090)

- Bug where unstack raises ValueError when MultiIndex contains PeriodIndex (GH4342)

- Bug in boxplot and hist draws unnecessary axes (GH6769)

- Regression in groupby.nth() for out-of-bounds indexers (GH6621)

- Bug in quantile with datetime values (GH6965)

- Bug where DataFrame.set_index, reindex and pivot don’t preserve DatetimeIndex and PeriodIndex attributes (GH3950, GH5878, GH6631)

- Bug where MultiIndex.get_level_values doesn’t preserve DatetimeIndex and PeriodIndex attributes (GH7092)

- Bug where Groupby doesn’t preserve tz (GH3950)

- Bug in PeriodIndex partial string slicing (GH6716)

- Bug in the HTML repr of a truncated Series or DataFrame not showing the class name with the large_repr set to ‘info’ (GH7105)

- Bug in DatetimeIndex specifying freq raises ValueError when passed value is too short (GH7098)

- Fixed a bug with the info repr not honoring the display.max_info_columns setting (GH6939)

- Bug in PeriodIndex string slicing with out of bounds values (GH5407)

- Fixed a memory error in the hashtable implementation/factorizer on resizing of large tables (GH7157)

- Bug in isnull when applied to 0-dimensional object arrays (GH7176)

- Bug in query/eval where global constants were not looked up correctly (GH7178)

- Bug in recognizing out-of-bounds positional list indexers with iloc and a multi-axis tuple indexer (GH7189)

- Bug in setitem with a single value, multi-index and integer indices (GH7190, GH7218)

- Bug in expressions evaluation with reversed ops, showing in series-dataframe ops (GH7198, GH7192)

- Bug in multi-axis indexing with > 2 ndim and a multi-index (GH7199)

- Fix a bug where invalid eval/query operations would blow the stack (GH5198)
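As an illustration of the read_fwf() entry in the list above, the sketch below uses None in colspecs to mean "from the start of the line" and "to the end of the line", in the same way as Python slices. The sample text and column names are made up.

```python
import io

import pandas as pd

# Hypothetical fixed-width text: a 3-character code followed by a number.
data = "abc12345\ndef67890\n"

frame = pd.read_fwf(
    io.StringIO(data),
    colspecs=[(None, 3), (3, None)],  # [start of line, 3) and [3, end of line)
    header=None,
    names=["code", "num"],
)
print(frame)
```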

v0.13.1 (February 3, 2014)¶

This is a minor release from 0.13.0 and includes a small number of API changes, several new features, enhancements, and performance improvements along with a large number of bug fixes. We recommend that all users upgrade to this version.

Highlights include:

- Added infer_datetime_format keyword to read_csv/to_datetime to allow speedups for homogeneously formatted datetimes.

- Will intelligently limit display precision for datetime/timedelta formats.

- Enhanced Panel apply() method.

- Suggested tutorials in new Tutorials section.

- Our pandas ecosystem is growing. We now feature related projects in a new Pandas Ecosystem section.

- Much work has been taking place on improving the docs, and a new Contributing section has been added.

- Even though it may only be of interest to devs, we <3 our new CI status page: ScatterCI.

Warning

0.13.1 fixes a bug that was caused by a combination of having numpy < 1.8 and doing chained assignment on a string-like array. Please review the docs; chained indexing can have unexpected results and should generally be avoided.

This would previously segfault:

In [1]: df = DataFrame(dict(A = np.array(['foo','bar','bah','foo','bar'])))

In [2]: df['A'].iloc[0] = np.nan

In [3]: df
Out[3]:
     A
0  NaN
1  bar
2  bah
3  foo
4  bar

The recommended way to do this type of assignment is:

In [4]: df = DataFrame(dict(A = np.array(['foo','bar','bah','foo','bar'])))

In [5]: df.ix[0,'A'] = np.nan

In [6]: df
Out[6]:
     A
0  NaN
1  bar
2  bah
3  foo
4  bar

Output Formatting Enhancements¶

df.info() view now displays dtype info per column (GH5682)

df.info() now honors the option max_info_rows, to disable null counts for large frames (GH5974)

In [7]: max_info_rows = pd.get_option('max_info_rows')

In [8]: df = DataFrame(dict(A = np.random.randn(10),
   ...:                     B = np.random.randn(10),
   ...:                     C = date_range('20130101',periods=10)))
   ...:

In [9]: df.iloc[3:6,[0,2]] = np.nan

# set to not display the null counts
In [10]: pd.set_option('max_info_rows',0)

In [11]: df.info()
<class 'pandas.core.frame.DataFrame'>
Int64Index: 10 entries, 0 to 9
Data columns (total 3 columns):
A    float64
B    float64
C    datetime64[ns]
dtypes: datetime64[ns](1), float64(2)

# this is the default (same as in 0.13.0)
In [12]: pd.set_option('max_info_rows',max_info_rows)

In [13]: df.info()
<class 'pandas.core.frame.DataFrame'>
Int64Index: 10 entries, 0 to 9
Data columns (total 3 columns):
A    7 non-null float64
B    10 non-null float64
C    7 non-null datetime64[ns]
dtypes: datetime64[ns](1), float64(2)

Add show_dimensions display option for the new DataFrame repr to control whether the dimensions print.

In [14]: df = DataFrame([[1, 2], [3, 4]])

In [15]: pd.set_option('show_dimensions', False)

In [16]: df
Out[16]:
   0  1
0  1  2
1  3  4

In [17]: pd.set_option('show_dimensions', True)

In [18]: df
Out[18]:
   0  1
0  1  2
1  3  4

[2 rows x 2 columns]

The ArrayFormatter for datetime and timedelta64 now intelligently limits precision based on the values in the array (GH3401)

Previously output might look like:

                  age               today                diff
0 2001-01-01 00:00:00 2013-04-19 00:00:00 4491 days, 00:00:00
1 2004-06-01 00:00:00 2013-04-19 00:00:00 3244 days, 00:00:00

Now the output looks like:

In [19]: df = DataFrame([ Timestamp('20010101'),
   ....:                  Timestamp('20040601') ], columns=['age'])
   ....:

In [20]: df['today'] = Timestamp('20130419')

In [21]: df['diff'] = df['today']-df['age']

In [22]: df
Out[22]:
         age      today      diff
0 2001-01-01 2013-04-19 4491 days
1 2004-06-01 2013-04-19 3244 days

[2 rows x 3 columns]

API changes¶

Add -NaN and -nan to the default set of NA values (GH5952). See NA Values.
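A quick check of this behaviour on inline text (the column name and values are made up): both spellings are read as missing values without passing na_values.

```python
import io

import pandas as pd

# "-NaN" and "-nan" are treated as missing by the default NA value set.
df = pd.read_csv(io.StringIO("value\n1.5\n-NaN\n-nan\n"))
print(df)
print(df["value"].isna().tolist())
```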

Added Series.str.get_dummies vectorized string method (GH6021), to extract dummy/indicator variables for separated string columns:

In [23]: s = Series(['a', 'a|b', np.nan, 'a|c'])

In [24]: s.str.get_dummies(sep='|')
Out[24]:
   a  b  c
0  1  0  0
1  1  1  0
2  0  0  0
3  1  0  1

[4 rows x 3 columns]

Added the NDFrame.equals() method to check whether two NDFrames have equal axes, dtypes, and values. Added the array_equivalent function to check whether two ndarrays are equal. NaNs in identical locations are treated as equal. (GH5283) See also the docs for a motivating example.

In [25]: df = DataFrame({'col':['foo', 0, np.nan]}).sort()

In [26]: df2 = DataFrame({'col':[np.nan, 0, 'foo']}, index=[2,1,0])

In [27]: df.equals(df)
Out[27]: True

In [28]: import pandas.core.common as com

In [29]: com.array_equivalent(np.array([0, np.nan]), np.array([0, np.nan]))
Out[29]: True

In [30]: np.array_equal(np.array([0, np.nan]), np.array([0, np.nan]))
Out[30]: False

DataFrame.apply will use the reduce argument to determine whether a Series or a DataFrame should be returned when the DataFrame is empty (GH6007).

Previously, calling DataFrame.apply on an empty DataFrame would return either a DataFrame if there were no columns, or the function being applied would be called with an empty Series to guess whether a Series or DataFrame should be returned:

In [31]: def applied_func(col):
   ....:     print("Apply function being called with: ", col)
   ....:     return col.sum()
   ....:

In [32]: empty = DataFrame(columns=['a', 'b'])

In [33]: empty.apply(applied_func)
('Apply function being called with: ', Series([], dtype: float64))
Out[33]:
a   NaN
b   NaN
dtype: float64

Now, when apply is called on an empty DataFrame: if the reduce argument is True a Series will be returned, if it is False a DataFrame will be returned, and if it is None (the default) the function being applied will be called with an empty Series to try to guess the return type.

In [34]: empty.apply(applied_func, reduce=True)
Out[34]:
a   NaN
b   NaN
dtype: float64

In [35]: empty.apply(applied_func, reduce=False)
Out[35]:
Empty DataFrame
Columns: [a, b]
Index: []

[0 rows x 2 columns]

Prior Version Deprecations/Changes¶

There are no announced changes in 0.13 or prior that are taking effect as of 0.13.1

Deprecations¶

There are no deprecations of prior behavior in 0.13.1

Enhancements¶

pd.read_csv and pd.to_datetime learned a new infer_datetime_format keyword which greatly improves parsing perf in many cases. Thanks to @lexual for suggesting and @danbirken for rapidly implementing. (GH5490, GH6021)

If parse_dates is enabled and this flag is set, pandas will attempt to infer the format of the datetime strings in the columns, and if it can be inferred, switch to a faster method of parsing them. In some cases this can increase the parsing speed by ~5-10x.

# Try to infer the format for the index column
df = pd.read_csv('foo.csv', index_col=0, parse_dates=True,
                 infer_datetime_format=True)

date_format and datetime_format keywords can now be specified when writing to excel files (GH4133)

MultiIndex.from_product convenience function for creating a MultiIndex from the cartesian product of a set of iterables (GH6055):

In [36]: shades = ['light', 'dark']

In [37]: colors = ['red', 'green', 'blue']

In [38]: MultiIndex.from_product([shades, colors], names=['shade', 'color'])
Out[38]:
MultiIndex(levels=[[u'dark', u'light'], [u'blue', u'green', u'red']],
           labels=[[1, 1, 1, 0, 0, 0], [2, 1, 0, 2, 1, 0]],
           names=[u'shade', u'color'])

Panel apply() will work on non-ufuncs. See the docs.

In [39]: import pandas.util.testing as tm

In [40]: panel = tm.makePanel(5)

In [41]: panel
Out[41]:
<class 'pandas.core.panel.Panel'>
Dimensions: 3 (items) x 5 (major_axis) x 4 (minor_axis)
Items axis: ItemA to ItemC
Major_axis axis: 2000-01-03 00:00:00 to 2000-01-07 00:00:00
Minor_axis axis: A to D

In [42]: panel['ItemA']
Out[42]:
                   A         B         C         D
2000-01-03  0.952478 -1.239072 -1.409432 -0.014752
2000-01-04  0.988138  0.139683  1.422986  1.272395
2000-01-05 -0.072608 -0.223019 -2.147855 -1.449567
2000-01-06 -0.550603  2.123692 -1.347533 -1.195524
2000-01-07 -0.938153  0.122273  0.363565 -0.591863

[5 rows x 4 columns]

Specifying an apply that operates on a Series (to return a single element)

In [43]: panel.apply(lambda x: x.dtype, axis='items')
Out[43]:
                  A        B        C        D
2000-01-03  float64  float64  float64  float64
2000-01-04  float64  float64  float64  float64
2000-01-05  float64  float64  float64  float64
2000-01-06  float64  float64  float64  float64
2000-01-07  float64  float64  float64  float64

[5 rows x 4 columns]

A similar reduction type operation

In [44]: panel.apply(lambda x: x.sum(), axis='major_axis')
Out[44]:
      ItemA     ItemB     ItemC
A  0.379252 -3.696907  3.709335
B  0.923558  0.504242  4.656781
C -3.118269 -1.545718  3.188329
D -1.979310 -0.758060 -1.436483

[4 rows x 3 columns]

This is equivalent to

In [45]: panel.sum('major_axis')
Out[45]:
      ItemA     ItemB     ItemC
A  0.379252 -3.696907  3.709335
B  0.923558  0.504242  4.656781
C -3.118269 -1.545718  3.188329
D -1.979310 -0.758060 -1.436483

[4 rows x 3 columns]

A transformation operation that returns a Panel, but is computing the z-score across the major_axis

In [46]: result = panel.apply(
   ....:            lambda x: (x-x.mean())/x.std(),
   ....:            axis='major_axis')
   ....:

In [47]: result
Out[47]:
<class 'pandas.core.panel.Panel'>
Dimensions: 3 (items) x 5 (major_axis) x 4 (minor_axis)
Items axis: ItemA to ItemC
Major_axis axis: 2000-01-03 00:00:00 to 2000-01-07 00:00:00
Minor_axis axis: A to D

In [48]: result['ItemA']
Out[48]:
                   A         B         C         D
2000-01-03  1.004994 -1.166509 -0.535027  0.350970
2000-01-04  1.045875 -0.036892  1.393532  1.536326
2000-01-05 -0.170198 -0.334055 -1.037810 -0.970374
2000-01-06 -0.718186  1.588611 -0.492880 -0.736422
2000-01-07 -1.162486 -0.051156  0.672185 -0.180500

[5 rows x 4 columns]

Panel apply() operating on cross-sectional slabs. (GH1148)

In [49]: f = lambda x: ((x.T-x.mean(1))/x.std(1)).T

In [50]: result = panel.apply(f, axis = ['items','major_axis'])

In [51]: result
Out[51]:
<class 'pandas.core.panel.Panel'>
Dimensions: 4 (items) x 5 (major_axis) x 3 (minor_axis)
Items axis: A to D
Major_axis axis: 2000-01-03 00:00:00 to 2000-01-07 00:00:00
Minor_axis axis: ItemA to ItemC

In [52]: result.loc[:,:,'ItemA']
Out[52]:
                   A         B         C         D
2000-01-03  0.116579 -0.667845 -1.151538 -0.157547
2000-01-04  0.650448 -1.114910  0.841527  0.760706
2000-01-05 -0.987433 -0.438897 -1.154468 -0.015033
2000-01-06  0.494000  1.060450 -0.775993 -1.140165
2000-01-07 -0.363770  0.013169  0.392036 -1.123913

[5 rows x 4 columns]

This is equivalent to the following

In [53]: result = Panel(dict([ (ax,f(panel.loc[:,:,ax]))
   ....:                         for ax in panel.minor_axis ]))
   ....:

In [54]: result
Out[54]:
<class 'pandas.core.panel.Panel'>
Dimensions: 4 (items) x 5 (major_axis) x 3 (minor_axis)
Items axis: A to D
Major_axis axis: 2000-01-03 00:00:00 to 2000-01-07 00:00:00
Minor_axis axis: ItemA to ItemC

In [55]: result.loc[:,:,'ItemA']
Out[55]:
                   A         B         C         D
2000-01-03  0.116579 -0.667845 -1.151538 -0.157547
2000-01-04  0.650448 -1.114910  0.841527  0.760706
2000-01-05 -0.987433 -0.438897 -1.154468 -0.015033
2000-01-06  0.494000  1.060450 -0.775993 -1.140165
2000-01-07 -0.363770  0.013169  0.392036 -1.123913

[5 rows x 4 columns]

Performance¶

Performance improvements for 0.13.1

- Series datetime/timedelta binary operations (GH5801)

- DataFrame count/dropna for axis=1

- Series.str.contains now has a regex=False keyword which can be faster for plain (non-regex) string patterns. (GH5879)

- Series.str.extract (GH5944)

- dtypes/ftypes methods (GH5968)

- indexing with object dtypes (GH5968)

- DataFrame.apply (GH6013)

- Regression in JSON IO (GH5765)

- Index construction from Series (GH6150)
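To illustrate the regex=False keyword of Series.str.contains mentioned in the list above, the sketch below contrasts a literal match with a regular-expression match on made-up strings. Besides being potentially faster, the literal mode avoids having to escape characters such as ".".

```python
import pandas as pd

s = pd.Series(["a.b", "axb"])

# Literal match: only strings that contain an actual "." qualify.
literal = s.str.contains(".", regex=False)

# Regex match (the default): "." matches any character.
as_regex = s.str.contains(".")

print(literal.tolist())
print(as_regex.tolist())
```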

Experimental¶

There are no experimental changes in 0.13.1

Bug Fixes¶

See V0.13.1 Bug Fixes for an extensive list of bugs that have been fixed in 0.13.1.

See the full release notes or issue tracker on GitHub for a complete list of all API changes, Enhancements and Bug Fixes.

v0.13.0 (January 3, 2014)¶

This is a major release from 0.12.0 and includes a number of API changes, several new features and enhancements along with a large number of bug fixes.

Highlights include:

- support for a new index type Float64Index, and other Indexing enhancements

- HDFStore has a new string based syntax for query specification

- support for new methods of interpolation

- updated timedelta operations

- a new string manipulation method extract

- Nanosecond support for Offsets

- isin for DataFrames

Several experimental features are added, including:

- new eval/query methods for expression evaluation

- support for msgpack serialization

- an i/o interface to Google’s BigQuery

There are several new or updated docs sections including:

- Comparison with SQL, which should be useful for those familiar with SQL but still learning pandas.

- Comparison with R, idiom translations from R to pandas.

- Enhancing Performance, ways to enhance pandas performance with eval/query.

Warning

In 0.13.0 Series has internally been refactored to no longer sub-class ndarray but instead subclass NDFrame, similar to the rest of the pandas containers. This should be a transparent change with only very limited API implications. See Internal Refactoring

API changes¶

read_excel now supports an integer in its sheetname argument giving the index of the sheet to read in (GH4301).

Text parser now treats anything that reads like inf (“inf”, “Inf”, “-Inf”, “iNf”, etc.) as infinity. (GH4220, GH4219), affecting read_table, read_csv, etc.
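A minimal check of the parsing rule, using inline text with a made-up column name; the spellings are taken from the examples listed above.

```python
import io

import numpy as np
import pandas as pd

# Mixed-case spellings of infinity are all parsed as floating-point infinities.
df = pd.read_csv(io.StringIO("x\ninf\n-Inf\niNf\n"))
values = df["x"].tolist()
print(values)
print(np.isinf(df["x"]).all())
```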

pandas is now Python 2/3 compatible without the need for 2to3, thanks to @jtratner. As a result, pandas now uses iterators more extensively. This also led to the introduction of substantive parts of Benjamin Peterson’s six library into compat. (GH4384, GH4375, GH4372)

pandas.util.compat and pandas.util.py3compat have been merged into pandas.compat. pandas.compat now includes many functions allowing 2/3 compatibility. It contains both list and iterator versions of range, filter, map and zip, plus other necessary elements for Python 3 compatibility. lmap, lzip, lrange and lfilter all produce lists instead of iterators, for compatibility with numpy, subscripting and pandas constructors. (GH4384, GH4375, GH4372)

Series.get with negative indexers now returns the same as [] (GH4390)

Changes to how Index and MultiIndex handle metadata (levels, labels, and names) (GH4039):

# previously, you would have set levels or labels directly
index.levels = [[1, 2, 3, 4], [1, 2, 4, 4]]

# now, you use the set_levels or set_labels methods
index = index.set_levels([[1, 2, 3, 4], [1, 2, 4, 4]])

# similarly, for names, you can rename the object
# but setting names is not deprecated
index = index.set_names(["bob", "cranberry"])

# and all methods take an inplace kwarg - but return None
index.set_names(["bob", "cranberry"], inplace=True)

All division with NDFrame objects is now truedivision, regardless of the future import. This means that operating on pandas objects will by default use floating point division, and return a floating point dtype. You can use // and floordiv to do integer division.

Integer division

In [3]: arr = np.array([1, 2, 3, 4])

In [4]: arr2 = np.array([5, 3, 2, 1])

In [5]: arr / arr2
Out[5]: array([0, 0, 1, 4])

In [6]: Series(arr) // Series(arr2)
Out[6]:
0    0
1    0
2    1
3    4
dtype: int64

True Division

In [7]: pd.Series(arr) / pd.Series(arr2) # no future import required
Out[7]:
0    0.200000
1    0.666667
2    1.500000
3    4.000000
dtype: float64

Infer and downcast dtype if downcast='infer' is passed to fillna/ffill/bfill (GH4604)

__nonzero__ for all NDFrame objects will now raise a ValueError; this reverts to the behavior of (GH1073, GH4633). See gotchas for a more detailed discussion.

This prevents doing boolean comparison on entire pandas objects, which is inherently ambiguous. These will all raise a ValueError.

if df:
    ....

df1 and df2

s1 and s2
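The following sketch shows the error being raised, along with explicit reductions that ask an unambiguous question instead. The use of any(), all() and empty as replacements is a suggestion made here, not something these notes prescribe.

```python
import pandas as pd

df = pd.DataFrame({"A": [True, False]})

try:
    bool(df)  # this is what `if df:` evaluates
    raised = False
except ValueError:
    raised = True

# Explicit reductions state which question is being asked.
any_true = bool(df["A"].any())
all_true = bool(df["A"].all())
is_empty = df.empty
```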

Added the .bool() method to NDFrame objects to facilitate evaluating single-element boolean Series:

In [1]: Series([True]).bool()
Out[1]: True

In [2]: Series([False]).bool()
Out[2]: False

In [3]: DataFrame([[True]]).bool()
Out[3]: True

In [4]: DataFrame([[False]]).bool()
Out[4]: False

All non-Index NDFrames (Series, DataFrame, Panel, Panel4D, SparsePanel, etc.), now support the entire set of arithmetic operators and arithmetic flex methods (add, sub, mul, etc.). SparsePanel does not support pow or mod with non-scalars. (GH3765)
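As a small illustration of the difference between an operator and its flex method, the sketch below adds two Series with partially overlapping labels. The fill_value argument is an existing parameter of the flex methods; the data and labels are invented.

```python
import pandas as pd

s1 = pd.Series([1.0, 2.0], index=["a", "b"])
s2 = pd.Series([10.0, 20.0], index=["b", "c"])

# Operator form: a label missing from either side produces NaN.
plain = s1 + s2

# Flex method form: fill_value stands in for a label missing on one side.
filled = s1.add(s2, fill_value=0)
```

Here plain has NaN at a and c, while filled carries 1.0, 12.0 and 20.0 for a, b and c.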

Series and DataFrame now have a mode() method to calculate the statistical mode(s) by axis/Series. (GH5367)
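A short sketch of mode() on made-up data. Because several values can tie for most frequent, the result is a Series or DataFrame rather than a scalar.

```python
import pandas as pd

s = pd.Series([1, 2, 2, 3, 3])
s_modes = s.mode()  # 2 and 3 tie, so both are returned

df = pd.DataFrame({"A": [1, 1, 2], "B": ["x", "y", "y"]})
df_modes = df.mode()  # computed column by column
```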

Chained assignment will now by default warn if the user is assigning to a copy. This can be changed with the option mode.chained_assignment, allowed options are raise/warn/None. See the docs.

In [5]: dfc = DataFrame({'A':['aaa','bbb','ccc'],'B':[1,2,3]})

In [6]: pd.set_option('chained_assignment','warn')

The following warning / exception will show if this is attempted.

In [7]: dfc.loc[0]['A'] = 1111

Traceback (most recent call last)
   ...
SettingWithCopyWarning:
   A value is trying to be set on a copy of a slice from a DataFrame.
   Try using .loc[row_index,col_indexer] = value instead

Here is the correct method of assignment.

In [8]: dfc.loc[0,'A'] = 11

In [9]: dfc
Out[9]:
     A  B
0   11  1
1  bbb  2
2  ccc  3

[3 rows x 2 columns]

- Panel.reindex has the following call signature Panel.reindex(items=None, major_axis=None, minor_axis=None, **kwargs) to conform with other NDFrame objects. See Internal Refactoring for more information.

- Series.argmin and Series.argmax are now aliased to Series.idxmin and Series.idxmax. These return the index of the min or max element respectively. Prior to 0.13.0 these would return the position of the min / max element. (GH6214)
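To show what returning the index rather than the position means, the sketch below calls idxmin and idxmax on a Series with string labels. It deliberately avoids argmin and argmax, whose behaviour depends on the pandas version in use.

```python
import pandas as pd

s = pd.Series([3.0, 1.0, 2.0], index=["x", "y", "z"])

min_label = s.idxmin()  # the label of the smallest value, not its position
max_label = s.idxmax()
min_value = s.loc[min_label]
```

The returned label can be passed straight to .loc, which is the main practical difference from a positional result.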

Prior Version Deprecations/Changes¶

These were announced changes in 0.12 or prior that are taking effect as of 0.13.0

- Remove deprecated Factor (GH3650)

- Remove deprecated set_printoptions/reset_printoptions (GH3046)

- Remove deprecated _verbose_info (GH3215)

- Remove deprecated read_clipboard/to_clipboard/ExcelFile/ExcelWriter from pandas.io.parsers (GH3717) These are available as functions in the main pandas namespace (e.g. pd.read_clipboard)

- default for tupleize_cols is now False for both to_csv and read_csv. Fair warning in 0.12 (GH3604)

- default for display.max_seq_len is now 100 rather than None. This activates truncated display ("...") of long sequences in various places. (GH3391)

Deprecations¶

Deprecated in 0.13.0

- deprecated iterkv, which will be removed in a future release (this was an alias of iteritems used to bypass 2to3's changes). (GH4384, GH4375, GH4372)

- deprecated the string method match, whose role is now performed more idiomatically by extract. In a future release, the default behavior of match will change to become analogous to contains, which returns a boolean indexer. (Their distinction is strictness: match relies on re.match while contains relies on re.search.) In this release, the deprecated behavior is the default, but the new behavior is available through the keyword argument as_indexer=True.
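The strictness difference between re.match and re.search mentioned above can be seen with the standard library alone. This sketch uses invented sample strings and also runs str.contains, which searches anywhere in each string.

```python
import re

import pandas as pd

values = ["a1", "b2", "xa3"]
pattern = r"a\d"

# re.match only succeeds at the start of the string;
# re.search succeeds anywhere in the string.
match_hits = [re.match(pattern, v) is not None for v in values]
search_hits = [re.search(pattern, v) is not None for v in values]

# str.contains follows the re.search semantics.
contains_hits = pd.Series(values).str.contains(pattern).tolist()
```

Only "xa3" separates the two: the pattern occurs in it, but not at the beginning.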

Indexing API Changes¶

Prior to 0.13, it was impossible to use a label indexer (.loc/.ix) to set a value that was not contained in the index of a particular axis (GH2578). See the docs.

In the Series case this is effectively an appending operation

In [10]: s = Series([1,2,3])

In [11]: s

Out[11]:

0 1

1 2

2 3

dtype: int64

In [12]: s[5] = 5.

In [13]: s

Out[13]:

0 1

1 2

2 3

5 5

dtype: float64

In [14]: dfi = DataFrame(np.arange(6).reshape(3,2),

....: columns=['A','B'])

....:

In [15]: dfi

Out[15]:

A B

0 0 1

1 2 3

2 4 5

[3 rows x 2 columns]

This would previously raise a KeyError

In [16]: dfi.loc[:,'C'] = dfi.loc[:,'A']

In [17]: dfi

Out[17]:

A B C

0 0 1 0

1 2 3 2

2 4 5 4

[3 rows x 3 columns]

This is like an append operation.

In [18]: dfi.loc[3] = 5

In [19]: dfi

Out[19]:

A B C

0 0 1 0

1 2 3 2

2 4 5 4

3 5 5 5

[4 rows x 3 columns]

A Panel setting operation on an arbitrary axis aligns the input to the Panel

In [20]: p = pd.Panel(np.arange(16).reshape(2,4,2),

....: items=['Item1','Item2'],

....: major_axis=pd.date_range('2001/1/12',periods=4),

....: minor_axis=['A','B'],dtype='float64')

....:

In [21]: p

Out[21]:

<class 'pandas.core.panel.Panel'>

Dimensions: 2 (items) x 4 (major_axis) x 2 (minor_axis)

Items axis: Item1 to Item2

Major_axis axis: 2001-01-12 00:00:00 to 2001-01-15 00:00:00

Minor_axis axis: A to B

In [22]: p.loc[:,:,'C'] = Series([30,32],index=p.items)

In [23]: p

Out[23]:

<class 'pandas.core.panel.Panel'>

Dimensions: 2 (items) x 4 (major_axis) x 3 (minor_axis)

Items axis: Item1 to Item2

Major_axis axis: 2001-01-12 00:00:00 to 2001-01-15 00:00:00

Minor_axis axis: A to C

In [24]: p.loc[:,:,'C']

Out[24]:

Item1 Item2

2001-01-12 30 32

2001-01-13 30 32

2001-01-14 30 32

2001-01-15 30 32

[4 rows x 2 columns]

Float64Index API Change¶

Added a new index type, Float64Index. This will be automatically created when passing floating values in index creation. This enables a pure label-based slicing paradigm that makes [],ix,loc for scalar indexing and slicing work exactly the same. See the docs (GH263).

A Float64Index is constructed by default for floating-point values.

In [25]: index = Index([1.5, 2, 3, 4.5, 5])

In [26]: index
Out[26]: Float64Index([1.5, 2.0, 3.0, 4.5, 5.0], dtype='float64')

In [27]: s = Series(range(5),index=index)

In [28]: s
Out[28]:
1.5    0
2.0    1
3.0    2
4.5    3
5.0    4
dtype: int32

Scalar selection for [],.ix,.loc will always be label based. An integer will match an equal float index (e.g. 3 is equivalent to 3.0)

In [29]: s[3]
Out[29]: 2

In [30]: s.ix[3]
Out[30]: 2

In [31]: s.loc[3]
Out[31]: 2

The only positional indexing is via iloc

In [32]: s.iloc[3]
Out[32]: 3

A scalar index that is not found will raise KeyError
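A minimal sketch of the three cases described so far, using only .loc and .iloc: a label hit, a positional lookup, and a missing label. The Series mirrors the one in the examples above.

```python
import pandas as pd

s = pd.Series(range(5), index=[1.5, 2.0, 3.0, 4.5, 5.0])

by_label = s.loc[3]      # integer 3 matches the float label 3.0
by_position = s.iloc[3]  # fourth element, whose label is 4.5

try:
    s.loc[3.5]  # there is no label 3.5
    missing_raises = False
except KeyError:
    missing_raises = True
```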

Slicing is ALWAYS on the values of the index, for [],ix,loc and ALWAYS positional with iloc

In [33]: s[2:4]
Out[33]:
2    1
3    2
dtype: int32

In [34]: s.ix[2:4]
Out[34]:
2    1
3    2
dtype: int32

In [35]: s.loc[2:4]
Out[35]:
2    1
3    2
dtype: int32

In [36]: s.iloc[2:4]
Out[36]:
3.0    2
4.5    3
dtype: int32

In float indexes, slicing using floats is allowed

In [37]: s[2.1:4.6]
Out[37]:
3.0    2
4.5    3
dtype: int32

In [38]: s.loc[2.1:4.6]
Out[38]:
3.0    2
4.5    3
dtype: int32

Indexing on other index types is preserved (including positional fallback for [],ix), with the exception that floating point slicing on a non-Float64Index will now raise a TypeError.

In [1]: Series(range(5))[3.5]
TypeError: the label [3.5] is not a proper indexer for this index type (Int64Index)

In [1]: Series(range(5))[3.5:4.5]
TypeError: the slice start [3.5] is not a proper indexer for this index type (Int64Index)

Using a scalar float indexer will be deprecated in a future version, but is allowed for now.

In [3]: Series(range(5))[3.0]
Out[3]: 3

HDFStore API Changes¶

Query Format Changes. A much more string-like query format is now supported. See the docs.

In [39]: path = 'test.h5'

In [40]: dfq = DataFrame(randn(10,4),
   ....:                 columns=list('ABCD'),
   ....:                 index=date_range('20130101',periods=10))
   ....:

In [41]: dfq.to_hdf(path,'dfq',format='table',data_columns=True)

Use boolean expressions, with in-line function evaluation.

In [42]: read_hdf(path,'dfq',
   ....:          where="index>Timestamp('20130104') & columns=['A', 'B']")
   ....:
Out[42]:
                   A         B
2013-01-05 -1.392054  1.153922
2013-01-06 -0.881047  0.295080
2013-01-07 -1.407085  0.126781
2013-01-08 -0.838843  0.553921
2013-01-09  1.529401  0.205455
2013-01-10  0.299071  1.076541

[6 rows x 2 columns]

Use an inline column reference

In [43]: read_hdf(path,'dfq',
   ....:          where="A>0 or C>0")
   ....:
Out[43]:
                   A         B         C         D
2013-01-01  1.126386  0.247112  0.121172  0.298984
2013-01-03  0.581073  2.763844  0.399325  0.668488
2013-01-04 -0.275774  0.500483  0.863065 -1.051628
2013-01-05 -1.392054  1.153922  1.181944  0.391371
2013-01-06 -0.881047  0.295080  1.863801 -1.712274
2013-01-07 -1.407085  0.126781  0.003760 -1.268994
2013-01-09  1.529401  0.205455  0.313013  0.866521
2013-01-10  0.299071  1.076541  0.363177  1.893680

[8 rows x 4 columns]

The format keyword now replaces the table keyword; allowed values are fixed(f) or table(t). The same defaults as prior to 0.13.0 remain, e.g. put implies fixed format and append implies table format. This default format can be set as an option by setting io.hdf.default_format.
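As a sketch of setting that option, the following changes io.hdf.default_format temporarily with option_context and restores it afterwards. It only reads and writes the option and does not touch any HDF5 file; using a context manager rather than set_option is a choice made for this example.

```python
import pandas as pd

default_before = pd.get_option("io.hdf.default_format")

# Inside the block the default storage format is "table".
with pd.option_context("io.hdf.default_format", "table"):
    inside = pd.get_option("io.hdf.default_format")

# On exit the previous value is restored.
default_after = pd.get_option("io.hdf.default_format")
```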